The jury ordered Meta to pay $4.2 million in damages and Google $1.8 million, relatively small sums for two companies that each spend more than $100 billion a year on capital expenditures.

This trial in Los Angeles serves as a test case for thousands of similar suits consolidated within California state courts.

‘ACCOUNTABILITY HAS ARRIVED’

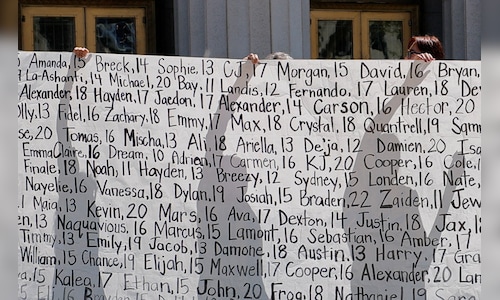

The case centers on a 20-year-old woman, identified in court as Kaley, who was a minor when the lawsuit was filed. She testified that she became addicted to Google’s YouTube and Meta’s Instagram at a young age because of their attention-grabbing design features, such as “infinite scroll,” which encourages users to keep scrolling.

The jury found Google and Meta negligent in the design of both apps and in failing to warn users about their potential risks.

“Today’s verdict is a referendum – from a jury, to an entire industry – that accountability has arrived,” said the plaintiff’s lead counsel.

Meta and Google said they disagreed with the verdict and plan to appeal.

Meta shares rose 0.3%, and shares of Google parent Alphabet gained 0.2%.

US law, chiefly Section 230 of the Communications Decency Act, broadly shields social media companies from liability for content posted on their platforms, so the plaintiff in this case focused on design choices rather than content.

Gil Luria, an analyst at investment firm D.A. Davidson, called the verdict a “setback” for Meta and Google.

“This process could extend through further cases and appeals, but might eventually compel these companies to introduce measures aimed at consumer safety, potentially hindering growth,” he remarked.

Snap and TikTok were also named as defendants but settled with the plaintiff before the trial began; the terms of those settlements were not disclosed.

MOUNTING CRITICISM

Over the past decade, large US technology companies have faced mounting criticism over child and teen safety, and the debate has increasingly moved into courtrooms and legislatures. Congress has yet to pass comprehensive social media legislation.

Last year, at least 20 states introduced laws on children’s social media use, according to the nonpartisan National Conference of State Legislatures.

These measures include restrictions on cellphone use in schools and requirements that users verify their age before opening a social media account. NetChoice, a trade group backed by tech companies including Meta and Google, is challenging age verification laws in court.

Following the verdict, US senators Marsha Blackburn (Republican) and Richard Blumenthal (Democrat) urged Congress to pass laws requiring social media companies to prioritize children’s safety in their platform designs.

A separate social media addiction case, brought against tech companies by a number of states and school districts, is scheduled to go to trial this summer in federal court in Oakland, California.

Another state court trial is expected to begin in Los Angeles in July, according to attorney Matthew Bergman, one of the plaintiffs’ lead lawyers. That case will involve Instagram, YouTube, TikTok, and Snapchat.

In a separate case, a New Mexico jury found on Tuesday that Meta had violated state law in a lawsuit brought by the state’s attorney general, who accused the company of misleading users about the safety of Facebook, Instagram, and WhatsApp while facilitating child sexual exploitation on those platforms.

TRIAL ARGUMENTS

During the trial, the plaintiff’s attorneys sought to show that Meta and Google deliberately targeted children and put profit ahead of safety. Meta’s lawyers pointed to the plaintiff’s difficult upbringing as a significant factor in her mental health problems, while YouTube argued that she used its platform only minimally.

Jurors saw internal documents showing how Meta and Google actively sought to attract younger users, and heard testimony from executives, including Meta CEO Mark Zuckerberg, who defended his company’s decisions.

Asked about Meta’s decision to lift a temporary ban on beauty filters, which some within the company had warned could harm teenage girls, Zuckerberg said he believed users should be able to express themselves freely.

“I felt like the evidence wasn’t clear enough to support limiting people’s expression,” he remarked.

Arguments over free speech and content moderation are likely to figure prominently in the companies’ defense.
